You all certainly heard about EMR, Dabricks, Dataflow, DynamoDB, BigQuery or Cosmos DB. Those are well known data services of AWS, Azure and GCP, but besides them, cloud providers offer some - often lesser known - services to consider in data projects. Let's see some of them in this blog post!

What would it take for you to trust your Databricks pipelines in production?

A 3-day bug hunt on a 3-person team costs up to €7,200 in lost engineering time. This workshop teaches you to prevent that — unit tests, data tests, and integration tests for PySpark and Databricks Lakeflow, including Spark Declarative Pipelines.

Konieczny

Data discovery

A data catalog is a data assets inventory that stores the datasets' metadata, such as their schemas, types, or labels. Populating a data catalog may then be manual. Hopefully, you don't have to write a data discovery pipeline if you are on AWS because this cloud provider offers a data crawler.

Data crawler is a part of AWS Glue service. It supports automatic discovery of AWS data stores (S3, DynamoDB, Redshift, Aurora, DocumentDB) and the JDBC-accessible databases (MariaDB, SQL Server, MySQL, Oracle, PostgreSQL). The crawler can read various file formats, including semi-structured formats like JSON, and infer their schema. The crawling job can run at a scheduled interval, or on-demand.

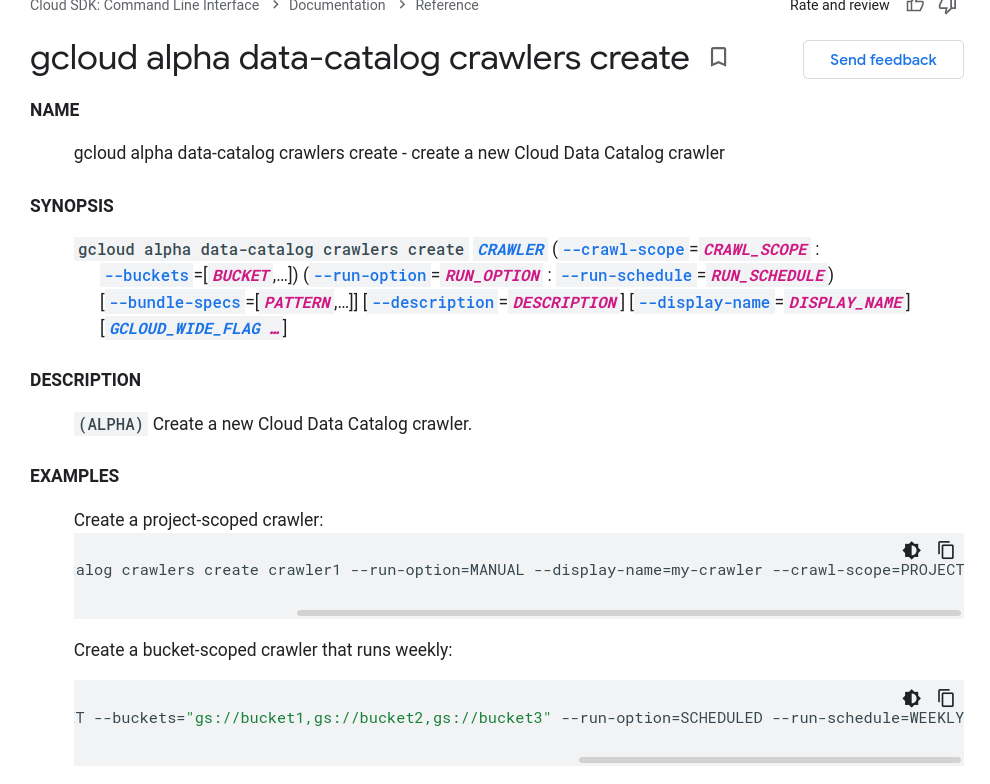

A crawler is also available in Azure Purview. When you define a new data source to integrate into the catalog, you can also choose a scan trigger and run it weekly, monthly, or only once. When it comes to GCP, I found the crawler traces only in the alpha SDK but no mention in the official documentation:

Visual data wrangling

The second type of less popular cloud data services are visual data preparation tools. Once again, you will find it in AWS Glue with Data Brew component. It helps clean and normalize the data with 200+ data cleansing functions and discover and understand it with the data profiling and data lineage features. Data Brew also enables transforming the manually built data transformation logic into a regularly scheduled job.

But Data Brew is not the first cloud data preparation UI-based service I've ever heard about. I discovered this feature first with GCP's DataPrep. It has similar features to Data Brew because it supports data preparation, profiling, and scheduling of the data preparation jobs backed by Dataflow or BigQuery.

I didn't find a similar service on Azure. The most similar feature to Data Brew and DataPrep is Power Query from Data Factory. This activity is in fact, a code-free data preparation where the service translates the visually defined data transformation rules to a data workflow.

Real-time ad-hoc querying

Although Azure doesn't have a pure data preparation service, it has a dedicated real-time ad-hoc querying service called Data Explorer. The service supports analyzing data from streaming data sources like Event Hub and Event Grid. It also supports querying data ingested directly from Data Factory workflows. All this with a SQL-like language called Kusto.

To query real-time data on AWS you can use the SQL version of Kinesis Data Analytics. I didn't find a corresponding service on GCP. Although Pub/Sub's "View message" feature lets you visualize the incoming messages, it doesn't have any querying capabilities.

Database migrations

The next service you may not use very often is a service for database migration. It's present on all of the 3 analyzed cloud providers but not always with the same features. AWS Database Migration Service supports offline and online migrations between homogeneous and heterogeneous data sources. You can, for example, use the service to migrate Oracle to MySQL. In addition to the data migration, the service has a Schema Conversion Tool that you can use to map the schemas before starting the migration.

Azure Database Migration Service also works with offline and online (continuous migration) modes. Unlike AWS' service, it will work pretty well with homogenous data sources and migrate an Azure DB for MySQL to AWS RDS MySQL, but not to a different database.

When it comes to the GCP Database Migration Service, it's limited to MySQL and PostgreSQL migrations to Cloud SQL databases.

Managed data lake

And to terminate, let's see how to manage data lakes on the cloud. On AWS you can use the Lake Formation service. Internally, it's composed of other AWS services to securely store (S3, IAM), discover (Glue Data Catalog), and transform (Glue Jobs and Crawlers) the data.

A similar but not the same service has GCP. It's called Dataplex and unlike Lake Formation, it's not advertised as a managed data lake. It's advertised as a unified data management platform for data lakes and data warehouses. The service also integrates with other GCP components like Dataflow and Data Fusion for data lifecycle management (transformation, archival), or Google's AI and ML for automated data discovery and data classification.

In this article, I wanted to share several IMO data services, less popular than NoSQL data stores, serverless functions, or managed Apache Spark environments. I hope you learned something new about them and saw that data engineering is not only about writing data processing jobs!

Data Engineering Design Patterns

Looking for a book that defines and solves most common data engineering problems? I wrote

one on that topic! You can read it online

on the O'Reilly platform,

or get a print copy on Amazon.

I also help solve your data engineering problems contact@waitingforcode.com 📩

Read also about Less known data services on the cloud here:

- GCP DataPlex AWS Lake Formation: How It Works AWS Database Migration Service Step-by-Step Walkthroughs Status of migration scenarios supported by Azure Database Migration Service Overview of Database Migration Service gcloud alpha data-catalog crawlers create

Related blog posts:

- What's new on the cloud for data engineers - part 12 (10.2023-02.2024)

- Vertical autoscaling for data processing on the cloud

- What's new on the cloud for data engineers - part 11 (06-09.2023)

You certainly know data processing or data storage cloud services. But what about the others? I presented some of them in the new blog post ? https://t.co/JvCCLUOwFe

— Bartosz Konieczny (@waitingforcode) October 24, 2021